One of the issues that folk sometimes want to check is the effectiveness of their overdue letters: that is what proportion of items are returned following an overdue letter. There are several ways to approach this. Here is one.

Overview

The report is based on the assumption that any one LOAN_ID relates to a single issue, multiple optional renew transactions and a single discharge. There are two queries. The first query returns all the Overdue letters in a date range, including the LOAN_ID. The second query returns loan transactions that meet two criteria:

- They must be discharges

- The LOAN_ID must be associated with a letter in the same date range

The two queries are then merged. Wherever the second query found something, the loan was discharged: i.e. the Overdue letter was “successful”

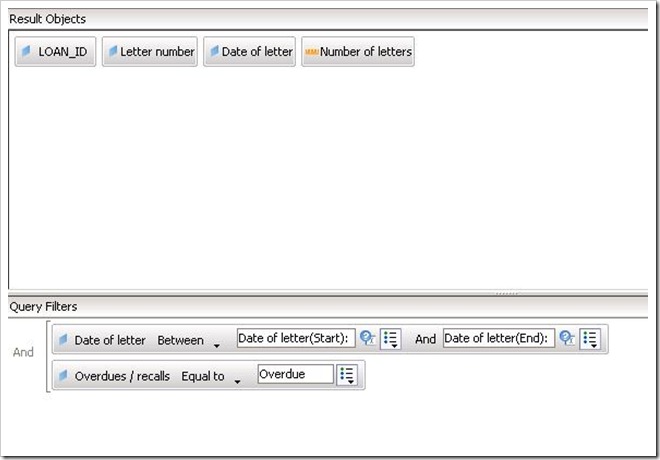

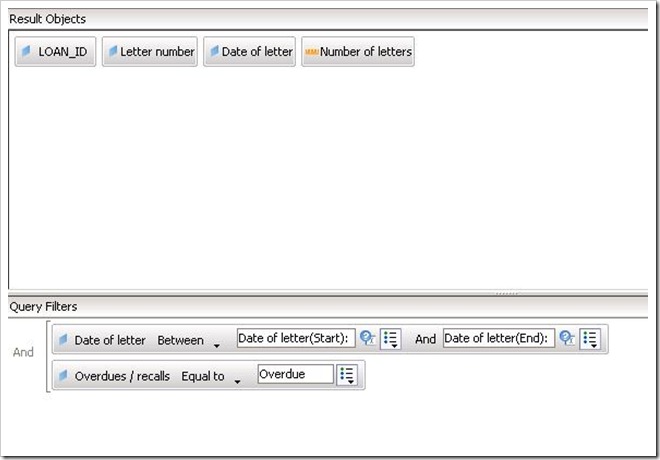

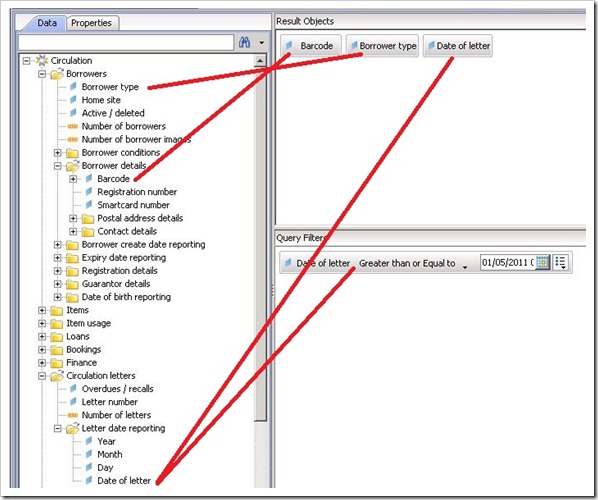

Query 1 – the Overdue Letters

This returns the LOAN_IDs in a date range. The Letter number and Date of letter are additional “nice-to-haves”

You will note that the letter dates are set up as prompts in this example. These dates can be selected directly instead, although this means modifying the report whenever it is run.

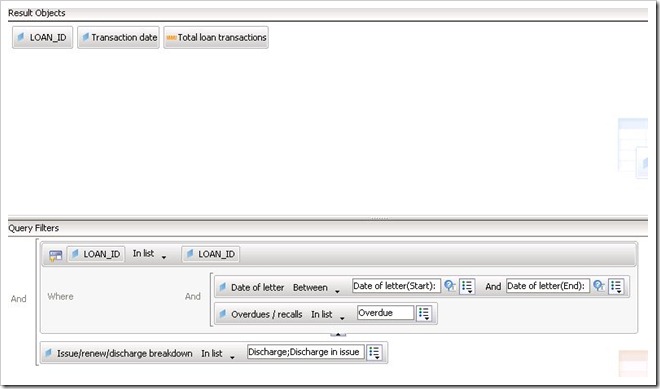

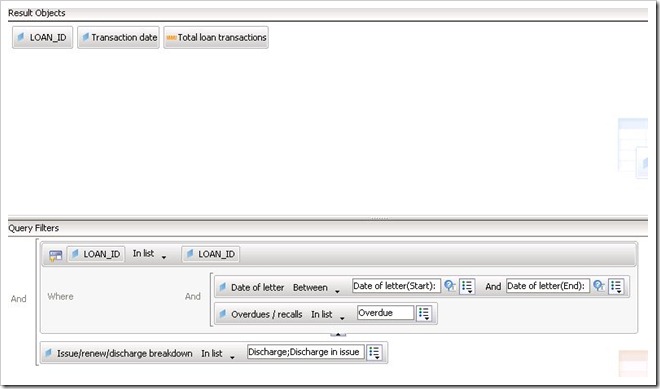

Query 2 – The Loans

This returns relatively little data. Because it is filtered to return discharges only, if a LOAN_ID is found, then we know that that loan was discharged.

The filter uses a sub query. What this says in effect is “go away and find the LOAN_IDs where there was an overdue letter in the date range, then use this list of LOAN_IDs to search for discharges”.

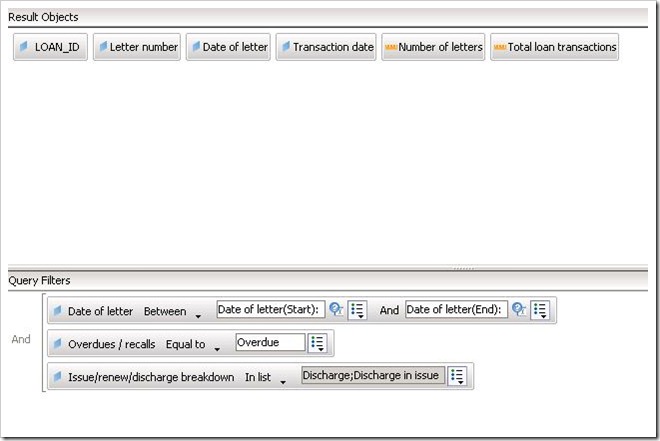

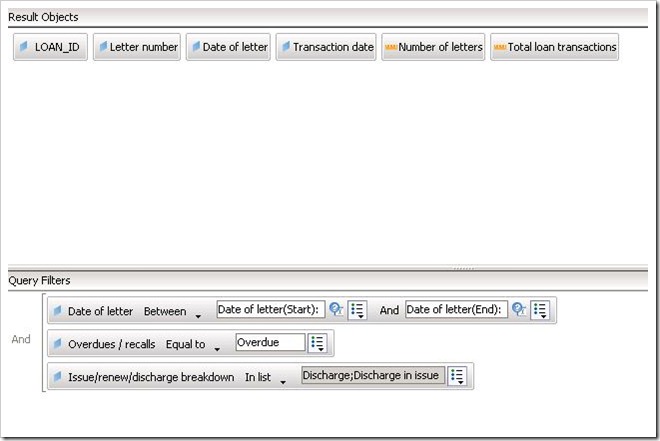

Why not use one query?

On the face of it, it looks like it should be possible to put everything into one query like this:

There are two problems with this.

- The information about letters and the information about loans are in different parts of the database and Decisions must generate some fairly complex SQL to return the data

- Even if Decisions is successful at returning any data at all this won’t return any data about letters where the item was not discharged: i.e. this query would at best only return “successful” letters not unsuccessful ones

So whilst two queries and a sub query looks complex it is actually the best way to get the data that you want.

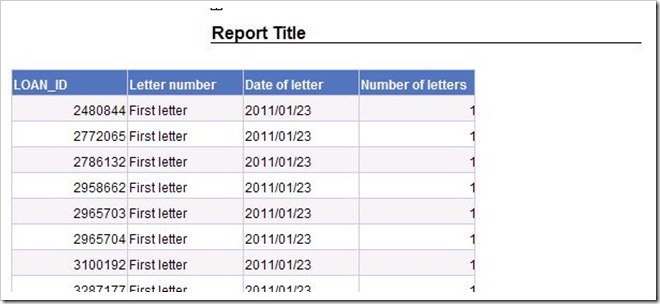

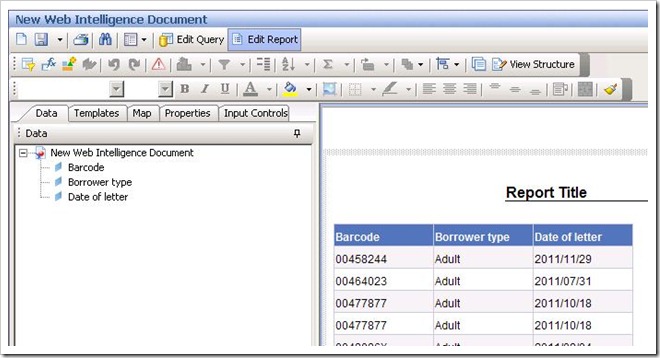

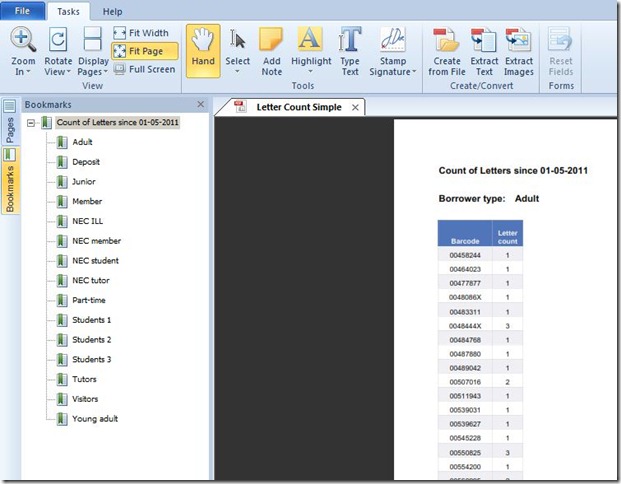

In the Report

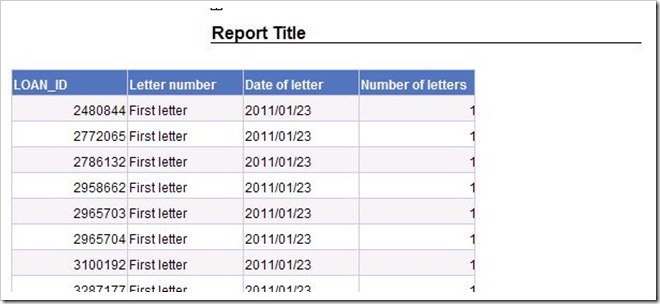

There are many ways to use the data in a report. By default you will get two tables. The first contains all the stuff about letters…

…

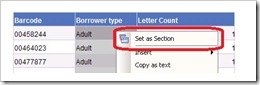

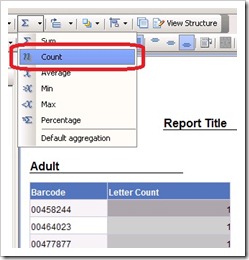

and the second contains all the loan data. I started by deleting this second (loan data) table completely.

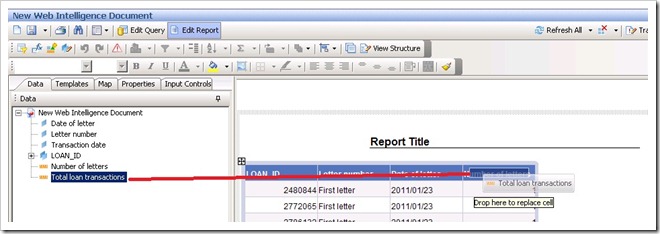

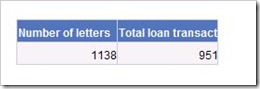

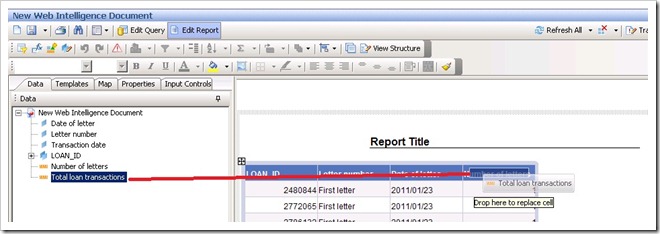

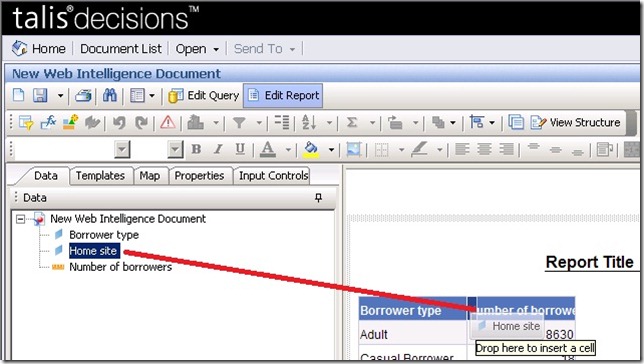

Next, drag Total Loan Transactions onto the Number of letters column and let go. This overwrites the column:

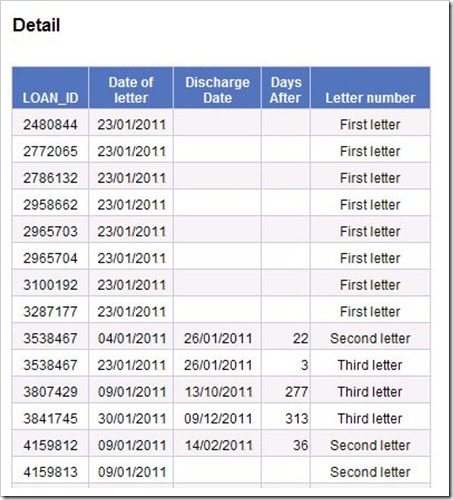

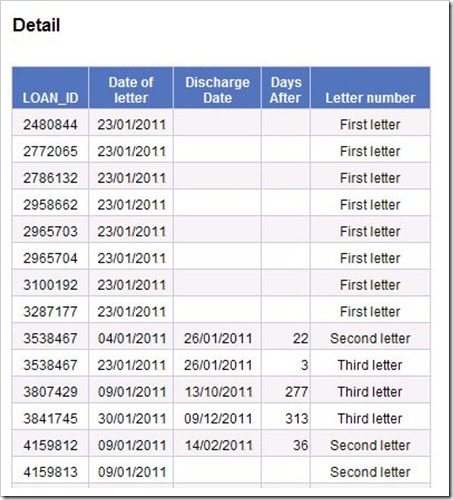

You now have the detailed data that you need. Wherever there is a 1 in the last column, that book was returned.

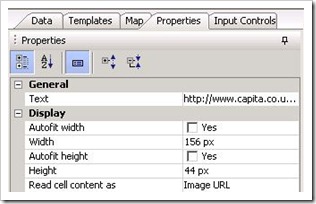

If you want to add the date that it was returned to the table you will find that you cannot do so directly. In theory there could be many different transaction dates for a single LOAN_ID (Issue, Renew etc) and Decisions doesn’t know what you do: that there should be just one discharge transaction for every LOAN_ID.

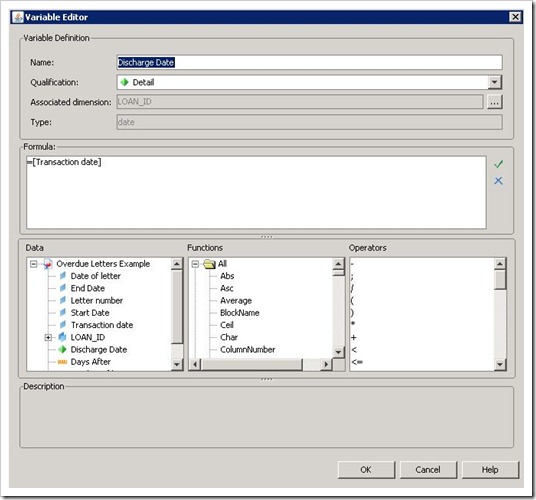

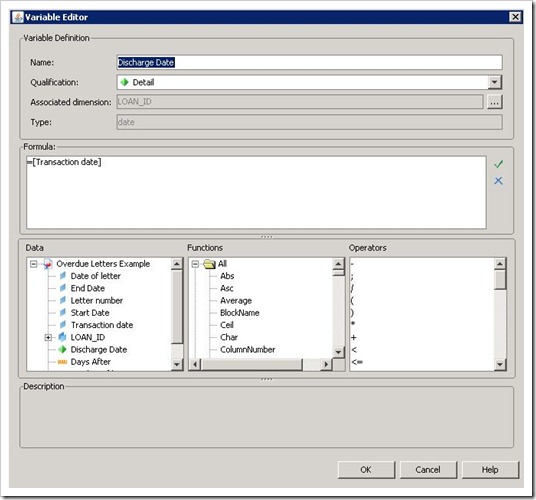

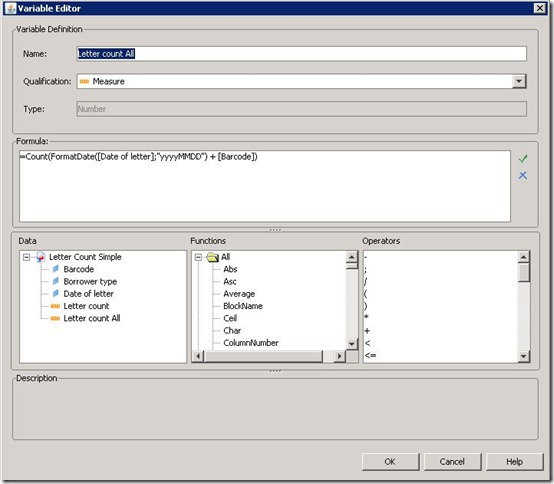

You know however, and there is a way of picking Decisions up by the neck and growling “read my lips”. Create a variable (I named it Discharge Date), set it equal to Transaction date and make it a detail of LOAN_ID. This tells Decisions that there will be just one (or zero) transaction dates for any one LOAN_ID. It will then let you add it to the table.

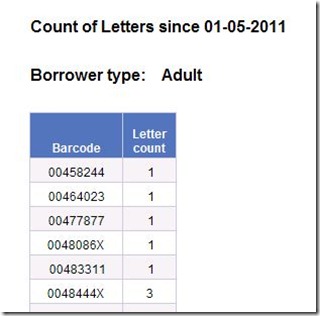

I created a second variable that used the DaysBetween() function to work out how long after the letter date the item was discharged. With some further tidying up and formatting the result looked like this:

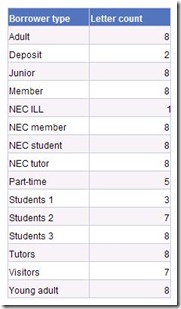

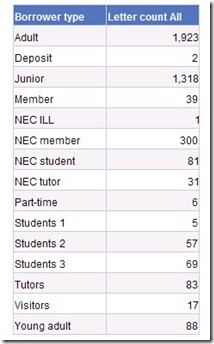

A summary

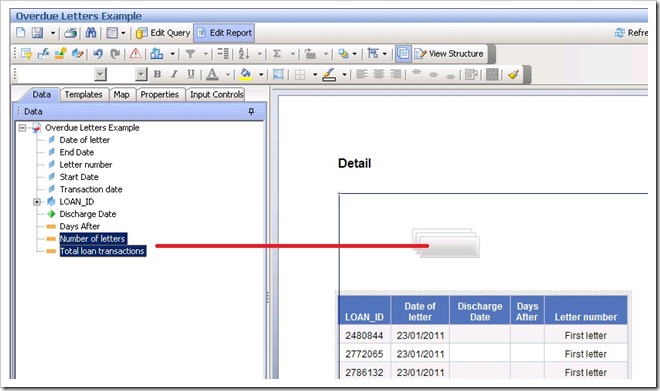

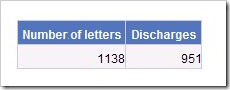

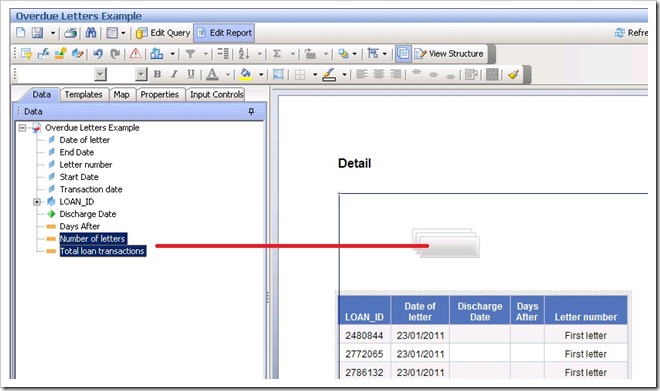

A final touch is to add a summary table. Add a new report tab or drag the main table down a bit and use the space created. Select (CTRL-click), drag and drop Total Loan Transactions and Number of letters into the report.

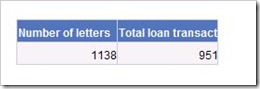

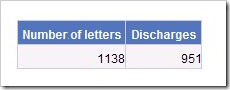

This creates a simple four-cell table containing the gross numbers:

You can if you wish rename the headings to something more sensible and/or tidy up alignments to produce something like this:

If you try this (successfully or unsuccessfully) I’d be most interested to hear how you got on: please do leave a comment

Recent Comments